
How to adjust the fan thresholds of a Dell PowerEdge

Adjusted lower critical thresholds for the fans of a PowerEdge 2800

Note: you MUST swap your fans for slower, quieter ones to reduce the noise. The threshold adjustment discussed in this article only makes that swap possible; without new fans, it's useless!

Intro

To swap the fans of a Dell PowerEdge for slower, quieter ones, you have to adjust their lower critical threshold (LCR). If you don't, the server's firmware actually lowers the fans' speed below its own LCR, panics, and spins them back up. Very noisy, very annoying.
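To make the failure mode concrete, here is a toy model. It is not Dell's actual BMC logic, which this article does not describe; it only assumes that each control cycle the controller tries the fans' quietest setting and, if the last reading was below the LCR, treats the fan as failing and returns to full speed. All RPM figures are hypothetical.

```python
from typing import List


def simulate_fan_cycles(min_rpm: int, full_rpm: int, lcr: int, cycles: int) -> List[int]:
    """Toy model of a fan controller fighting its own lower critical threshold.

    Each cycle: if the previous reading was below the LCR, 'panic' and go to
    full speed; otherwise try the quietest setting (min_rpm).
    Returns the RPM reading after every cycle.
    """
    readings = []
    rpm = full_rpm  # start at full speed, as after power-on
    for _ in range(cycles):
        if rpm < lcr:
            rpm = full_rpm  # reading below LCR: looks like a dead fan, spin up
        else:
            rpm = min_rpm  # reading looks healthy: quiet down
        readings.append(rpm)
    return readings


if __name__ == "__main__":
    # Hypothetical slow replacement fan that bottoms out at 1500 RPM.
    print(simulate_fan_cycles(min_rpm=1500, full_rpm=4500, lcr=2000, cycles=4))  # [1500, 4500, 1500, 4500]
    print(simulate_fan_cycles(min_rpm=1500, full_rpm=4500, lcr=1000, cycles=4))  # [1500, 1500, 1500, 1500]
```

With the LCR above what the slow fan can reach, the readings alternate between quiet and full speed; once the LCR sits below the fan's minimum, the fan settles at its quiet speed.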

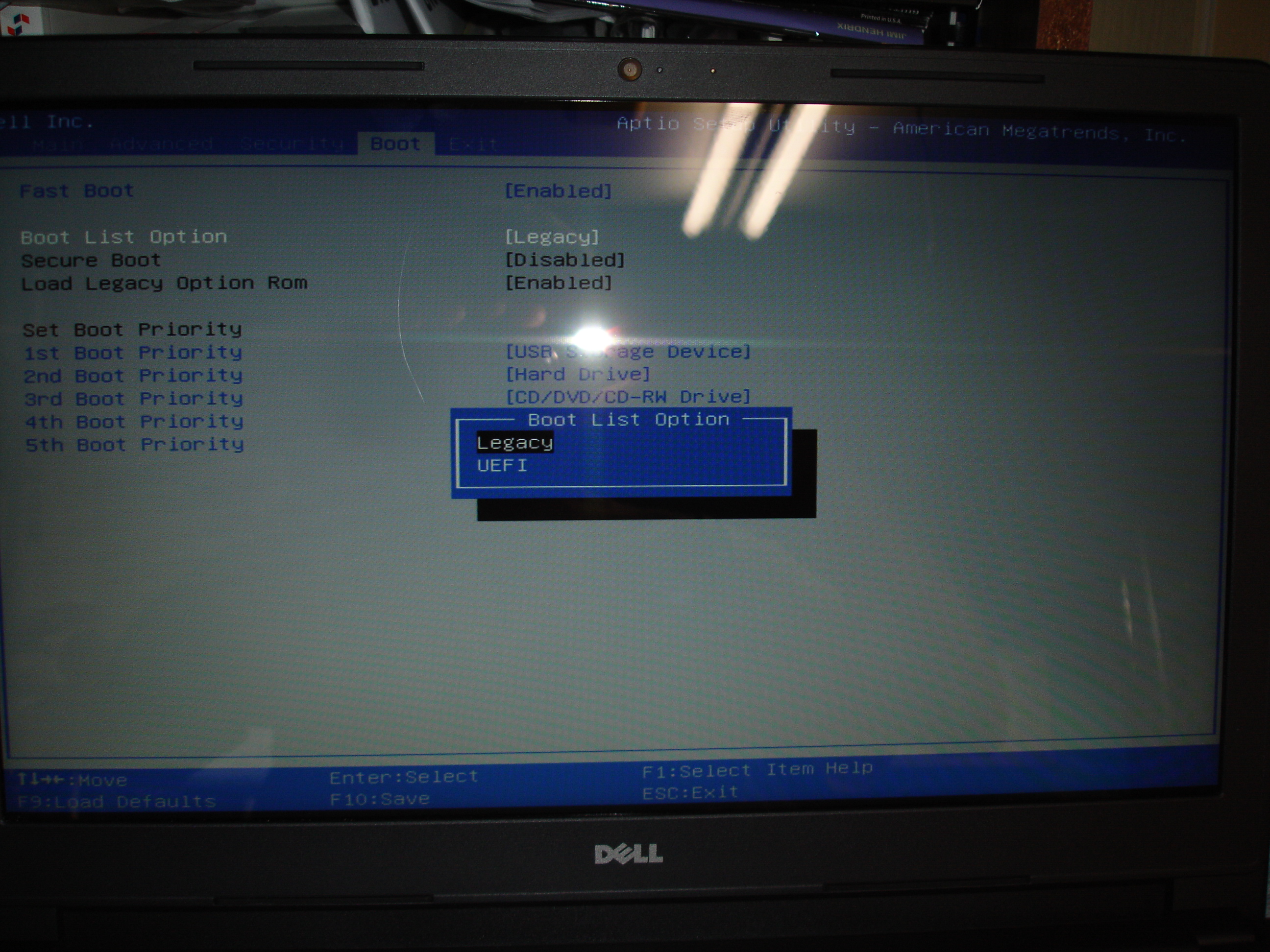

This behavior is controlled by the BMC, an embedded management controller. You can configure many parameters of the BMC using the IPMI protocol. Unfortunately, the BMC firmware of a Dell PowerEdge does not allow you to change the thresholds mentioned above. I contacted Dell support, and they refused to change the thresholds for such an old server. So I had no choice but to change them myself. It took me quite a while to isolate the proper setting in the BMC's firmware, the checksums, etc. But I managed, and the server now runs very quietly with adjusted thresholds.

Below, I explain how to adjust these thresholds with a Python script I wrote. Note that you'll need Python 2. In case someone is interested, I can also write up how I did it, but that is for another post.

Update: I created a project page for my server.

The result

First, here's the result: my PowerEdge 2800 with swapped fans and patched fan thresholds. This has been recorded with my laptop, 1… The system is now more silent than my desktop!

Prerequisites

I assume that you have a sufficiently recent Linux distribution up and running, with Python installed and IPMI set up. If you don't, have a look at this article, which explains how to get a recent Ubuntu version running (without installing anything on your hard disk!).

Adjusting the fan thresholds

I assume that you now have FreeIPMI installed, the BMC configured, and that you can query the BMC using IPMI.

Query the sensors

First, you have to query the sensors of your server using IPMI.
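If you'd rather not copy the fan values by hand, a minimal Python 3 sketch can pick the fan lines out of the `ipmi-sensors` output. It assumes the one-line-per-sensor format used in this article (`N: Name (Type): reading (lower/upper): [state]`); newer FreeIPMI releases may print a table-style layout instead, in which case the regular expression would need adjusting. The sample lines and their readings are made up for illustration.

```python
import re
from typing import List, NamedTuple, Optional

# One fan line in the format used in this article, e.g.
# "40: FAN 3 RPM (Fan): 4200.00 RPM (2025.00/NA): [OK]"
FAN_LINE = re.compile(
    r"^(?P<num>\d+): (?P<name>.+?) \(Fan\): "
    r"(?P<reading>NA|[\d.]+ RPM) "
    r"\((?P<lower>NA|[\d.]+)/(?P<upper>NA|[\d.]+)\): "
    r"\[(?P<state>[^\]]*)\]$"
)


class FanSensor(NamedTuple):
    number: int
    name: str
    reading_rpm: Optional[float]  # None when the sensor reports NA
    lower: Optional[float]  # first value in parentheses (lower threshold)
    upper: Optional[float]  # second value in parentheses (upper threshold)
    state: str


def _value(text: str) -> Optional[float]:
    """Turn '2025.00' or '4200.00 RPM' into a float; 'NA' becomes None."""
    return None if text == "NA" else float(text.split()[0])


def parse_fan_sensors(output: str) -> List[FanSensor]:
    """Return every fan sensor line that carries a reading and thresholds."""
    fans = []
    for line in output.splitlines():
        match = FAN_LINE.match(line.strip())
        if match:  # lines such as 'Fan Redundancy' have no thresholds and are skipped
            fans.append(FanSensor(
                number=int(match["num"]),
                name=match["name"],
                reading_rpm=_value(match["reading"]),
                lower=_value(match["lower"]),
                upper=_value(match["upper"]),
                state=match["state"],
            ))
    return fans


# Illustrative input; the values are made up.
SAMPLE = """\
38: FAN 1 RPM (Fan): NA (2025.00/NA): [NA]
40: FAN 3 RPM (Fan): 4200.00 RPM (2025.00/NA): [OK]
42: FAN 5 RPM (Fan): 1800.00 RPM (900.00/NA): [OK]
56: Fan Redundancy (Fan): [Fully Redundant]
"""

for fan in parse_fan_sensors(SAMPLE):
    print(fan.number, fan.name, fan.lower)
```

On the server itself you could pass it `subprocess.run(["ipmi-sensors"], capture_output=True, text=True).stdout` (Python 3.7+) instead of the sample string.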
The output should look a bit like this:

```
you@server$ ipmi-sensors
1: Temp (Temperature): NA (NA/1…): [NA]
2: Temp (Temperature): NA (NA/1…): [NA]
3: Ambient Temp (Temperature): NA (3…): [NA]
4: Planar Temp (Temperature): NA (3…): [NA]
5: Riser Temp (Temperature): NA (3…): [NA]
6: Temp (Temperature): NA (NA/NA): [NA]
7: Temp (Temperature): NA (NA/NA): [NA]
8: Temp (Temperature): 7… C (NA/125.00): [OK]
9: Temp (Temperature): NA (NA/1…): [NA]
10: Ambient Temp (Temperature): 2… C (3.00/47.0…): [OK]
11: Planar Temp (Temperature): 4… C (3.00/72.0…): [OK]
12: Riser Temp (Temperature): 5… C (3.00/62.0…): [OK]
13: Temp (Temperature): NA (NA/NA): [NA]
14: Temp (Temperature): NA (NA/NA): [NA]
15: CMOS Battery (Voltage): NA (2…): [NA]
16: ROMB Battery (Voltage): [NA]
17: VCORE (Voltage): [State Deasserted]
18: VCORE (Voltage): [NA]
19: PROC VTT (Voltage): [State Deasserted]
20: …V PG (Voltage): [State Deasserted]
21: …V PG (Voltage): [State Deasserted]
22: …V PG (Voltage): [State Deasserted]
23: …V PG (Voltage): [State Deasserted]
24: …V Riser PG (Voltage): [State Deasserted]
25: Riser PG (Voltage): [State Deasserted]
26: CMOS Battery (Voltage): 3… V (2.64/NA): [OK]
27: Presence (Entity Presence): [Entity Present]
28: Presence (Entity Presence): [Entity Absent]
29: Presence (Entity Presence): [Entity Present]
30: Presence (Entity Presence): [Entity Absent]
31: ROMB Presence (Entity Presence): [Entity Present]
32: FAN 1 RPM (Fan): NA (1…): [NA]
33: FAN 2 RPM (Fan): NA (1…): [NA]
34: FAN 3 RPM (Fan): NA (1…): [NA]
35: FAN 4 RPM (Fan): NA (1…): [NA]
36: FAN 5 RPM (Fan): NA (1…): [NA]
37: FAN 6 RPM (Fan): NA (1…): [NA]
38: FAN 1 RPM (Fan): NA (2…): [NA]
39: FAN 2 RPM (Fan): NA (2…): [NA]
40: FAN 3 RPM (Fan): 4… RPM (2025.0…/NA): [OK]
41: FAN 4 RPM (Fan): 4… RPM (2025.0…/NA): [OK]
42: FAN 5 RPM (Fan): 1… RPM (900.00/NA): [OK]
43: FAN 6 RPM (Fan): 1… RPM (900.00/NA): [OK]
44: FAN 7 RPM (Fan): 1… RPM (900.00/NA): [OK]
45: FAN 8 RPM (Fan): 1… RPM (900.00/NA): [OK]
46: Status (Processor): [Processor Presence detected]
47: Status (Processor): [NA]
48: Status (Power Supply): [Presence detected]
49: Status (Power Supply): [NA]
50: VRM (Power Supply): [Presence detected]
51: VRM (Power Supply): [Presence detected]
52: OS Watchdog (Watchdog 2): [OK]
53: SEL (Event Logging Disabled): [Unknown]
54: Intrusion (Physical Security): [OK]
55: PS Redundancy (Power Supply): [NA]
56: Fan Redundancy (Fan): [Fully Redundant]
7…: SCSI Connector A (Cable/Interconnect): [NA]
7…: SCSI Connector B (Cable/Interconnect): [NA]
7…: SCSI Connector A (Cable/Interconnect): [NA]
7…: Drive (Slot/Connector): [NA]
7…: Drive (Slot/Connector): [NA]
7…: Drive (Slot/Connector): [NA]
7…: Secondary (Module/Board): [NA]
80: ECC Corr Err (Memory): [Unknown]
81: ECC Uncorr Err (Memory): [Unknown]
82: I/O Channel Chk (Critical Interrupt): [Unknown]
83: PCI Parity Err (Critical Interrupt): [Unknown]
84: PCI System Err (Critical Interrupt): [Unknown]
85: SBE Log Disabled (Event Logging Disabled): [Unknown]
86: Logging Disabled (Event Logging Disabled): [Unknown]
87: Unknown (System Event): [Unknown]
88: CPU Protocol Err (Processor): [Unknown]
89: CPU Bus PERR (Processor): [Unknown]
90: CPU Init Err (Processor): [Unknown]
91: CPU Machine Chk (Processor): [Unknown]
92: Memory Spared (Memory): [Unknown]
93: Memory Mirrored (Memory): [Unknown]
94: Memory RAID (Memory): [Unknown]
95: Memory Added (Memory): [Unknown]
96: Memory Removed (Memory): [Unknown]
97: PCIE Fatal Err (Critical Interrupt): [Unknown]
98: Chipset Err (Critical Interrupt): [Unknown]
99: Err Reg Pointer (OEM Reserved): [Unknown]
```

You have to note the part about the fans (d'oh). Record the sensor numbers, fan names, and thresholds (the values in parentheses). You'll need them later to identify your system.

Download the latest BMC firmware

Go to http://support.… and find the BMC firmware for your system. Select any Linux OS; the BMC firmware should be listed under something like Embedded Server Management. On the download page, select the .BIN package. In my case the file was called BMC_FRMW_LX_R2….BIN.
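The patch step asks for new thresholds that must be a multiple of a fixed step, with roughly half the usual speed as a good target. A minimal sketch of that arithmetic: the step is a parameter, and 75 RPM in the example is an assumption chosen only because the 2025 and 900 RPM fan thresholds in the sample output are both multiples of 75. `usual_rpm` is whatever "usual speed" you start from. The sketch rounds down so the new LCR stays below what the slow fan reaches, and it never returns 0, since a zero threshold could not flag a broken fan. The function name is hypothetical, not part of the author's patch script.

```python
def proposed_lcr(usual_rpm: float, step_rpm: int, fraction: float = 0.5) -> int:
    """Suggest a new lower critical threshold (LCR).

    Takes `fraction` of the usual speed, rounds DOWN to a multiple of
    `step_rpm`, and never goes below one step, because a threshold of 0
    would stop the BMC from noticing a dead fan.
    """
    if step_rpm <= 0:
        raise ValueError("step_rpm must be positive")
    if not 0 < fraction <= 1:
        raise ValueError("fraction must be in (0, 1]")
    target = int(usual_rpm * fraction)  # e.g. half the usual speed
    rounded = (target // step_rpm) * step_rpm  # largest multiple of the step <= target
    return max(rounded, step_rpm)


if __name__ == "__main__":
    STEP = 75  # assumed step size; check what the patch script accepts
    for usual in (2025, 900):
        print(usual, "->", proposed_lcr(usual, STEP))  # 2025 -> 975, then 900 -> 450
```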
Download the .BIN package.

Fix and extract the .BIN package

In my case the .BIN package did not work properly. I had to fix it first, and then extract it. For this, open a terminal and go to the folder you've downloaded the package to. Then execute:

```
you@server$ sed -i 's/#!\/bin\/sh/#!\/bin\/bash/' BMC_FRMW_LX_R2….BIN  # fix interpreter bug
you@server$ chmod +x BMC_FRMW_LX_R2….BIN  # make executable
you@server$ sudo mkdir bmc_firmware  # create dir as root
you@server$ sudo ./BMC_FRMW_LX_R2….BIN --extract bmc_firmware  # yes, you have to do this as root!
```

This should extract your firmware. Check that you have a file called extracted/payload/bmcflsh. If not, game over: your system isn't compatible. If yes, yay!

Patch firmware

Next, download the program I wrote for patching the firmware. Then, use the program on the firmware as shown below:

```
you@server$ wget https://raw.…
```

The program is a Python (version >= 2.…) script … Yes, there can be support for multiple systems in a single firmware. You recorded the fan values before? Now you know why: you have to use them to identify your system among the ones the script shows you. Just use the number of fans, their names, and their thresholds to identify your system. Maybe you're lucky and the system name has already been found and is displayed directly.

In the next step you can select fans and change their thresholds. Just remember that the result is a multiple of 7…. Half the usual speed has proven to be a good value. I've never tested what happens if you set it to 0, but this would be quite stupid, as you could no longer detect broken fans.

If the program displays a code at the end and asks you to report back, please do so! That way we can identify the other systems using their code (for example, the code of a PowerEdge 2800 is "K_C").

Flash firmware

Finally, flash the firmware as shown below. Disclaimer: I am not responsible for any damage you do to your system! If you flash this firmware, you might render your PowerEdge server unusable.
It might even be unrecoverable. Additionally, badly set thresholds might cause overheating. Also, use the usual caution when flashing (do not interrupt power, do not flash over a network link, do not be stupid).

```
LD_LIBRARY_PATH=./hapi/opt/dell/dup/lib:$LD_LIBRARY_PATH ./bmcfl…
```

Cross your fingers. The flasher should accept the firmware. If not, and it complains about the CRC, something went wrong.

Windows Server 2…

Last week, Microsoft announced the final release of Windows Server 2…. In addition, Microsoft has announced that Windows Server 2… I can now publish the setup of my lab configuration, which is almost a production platform. Only the SSDs are not enterprise grade, and one Xeon is missing per server. But to show you how easy it is to implement a hyperconverged solution, it is fine. In this topic, I will show you how to deploy a 2-node hyperconverged cluster from the beginning with Windows Server 2…. But before running any PowerShell cmdlets, let's take a look at the design.

Design overview

In this part I'll talk about the implemented hardware and how both nodes are connected. Then I'll introduce the network design and the required software implementation.

Hardware consideration

First of all, let me present the design. I have built both nodes myself; neither is a manufacturer-built server. Below you can find the hardware that I have implemented in each node:

- CPU: Xeon 2…
- Motherboard: Asus Z9PA-U8 with ASMB6-iKVM for KVM-over-Internet (Baseboard Management Controller)
- PSU: Fortron 3…W FSP FSP350-6…GHC
- Case: Dexlan 4U IPC-E450
- RAM: 1…GB DDR3 registered ECC
- Storage devices:
  - 1x Intel SSD 5…GB for the operating system
  - Samsung NVMe SSD 9… Pro 256GB (Storage Spaces Direct cache)
  - 4x Samsung SATA SSD 8… EVO 500GB (Storage Spaces Direct capacity)
- Network adapters:
  - 1x Intel 8…L 1GB for VM workloads (two controllers), integrated into the motherboard
  - Mellanox ConnectX… Pro 10GB for storage and live-migration workloads (two controllers). The Mellanox adapters are connected with two passive copper SFP cables provided by Mellanox.
- Switch: Ubiquiti ES-2… Lite 1GB

If this were production, I'd replace the SSDs with enterprise-grade SSDs and add an NVMe SSD for caching. Finally, I'd buy servers with two Xeons each. Below you can find the hardware implementation.

Network design

To support this configuration, I have created five network subnets:

- Management network: 1…, VID 10 (native VLAN). This network is used for Active Directory, management through RDS or PowerShell, and so on. Fabric VMs will also be connected to this subnet.
- DMZ network: 10.…, VID 11. This network is used by DMZ VMs such as web servers, AD FS, etc.
- Cluster network: 1…, VID 100. This is the cluster heartbeat network.
- Storage01 network: 1…, VID 101. This is the first storage network. It is used for SMB 3… and Live Migration.
- Storage02 network: …, VID 102. This is the second storage network. It is used for SMB 3… and Live Migration.

I can't leverage Simplified SMB MultiChannel because I don't have a 10GB switch, so each 10GB controller must belong to a separate subnet. I will deploy a Switch Embedded Teaming for the 1GB network adapters. I will not implement a Switch Embedded Teaming for the 10GB adapters because a switch is missing.

Logical design

I will have two nodes called pyhyv… (Physical Hyper-V). The first challenge concerns the failover cluster. Because I have no other physical server, the domain controllers will be virtual. But if I implement the domain controller VMs in the cluster, how can the cluster start? So the DC VMs must not be in the cluster and must be stored locally. To support high availability, both nodes will host a domain controller locally in the system volume (C:\). This way, the node boots, the DC VM starts, and then the failover cluster can start. Both nodes are deployed in core mode because I really don't like graphical user interfaces on hypervisors.
I don't deploy Nano Server because I don't like the Current Branch for Business model for Hyper-V and storage usage. The following features will be deployed on both nodes:

- Hyper-V + PowerShell management tools
- Failover Cluster + PowerShell management tools
- Storage Replica (this is optional, only if you need the Storage Replica feature)

The storage configuration will be easy: I'll create a single storage pool with all SATA and NVMe SSDs. Then I will create two Cluster Shared Volumes that will be distributed across both nodes. The CSVs will be called CSV-01 and CSV-02.

Operating system configuration

I show how to configure a single node. You have to repeat these operations for the second node in the same way. This is why I recommend putting the commands in a script: the script will help to avoid human errors.

BIOS configuration

The BIOS may differ depending on the manufacturer and the motherboard. But I always do the same things on each server:

- Check that the server boots in UEFI mode
- Enable virtualization technologies such as VT-d, VT-x, SLAT and so on
- Configure the server for high performance (so that the CPUs have the maximum frequency available)
- Enable HyperThreading
- Disable all unwanted hardware (audio card, serial/COM port and so on)
- Disable PXE boot on unwanted network adapters to speed up the boot of the server
- Set the date/time

Next, I check that all the memory is detected and all storage devices are plugged in. When I have time, I run memtest on the server to validate the hardware.

OS first settings

I have deployed my nodes from a USB stick configured with EasyBoot. Once the system is installed, I have deployed drivers for the motherboard and for the Mellanox network adapters. Because I can't connect to Device Manager with a remote MMC, I use the following commands to check whether the drivers are installed:

```powershell
gwmi Win32_SystemDriver | select name,@{n="version";e={(gi $_.pathname).VersionInfo.FileVersion}}
gwmi Win32_PnPSignedDriver | select devicename,driverversion
```

After all drivers are installed, I configure the server name, the updates, the remote connection and so on. For this, I use sconfig. This tool is easy, but it doesn't provide automation. You can do the same thing with PowerShell cmdlets, but I have only two nodes to deploy and I find this easier. All you have to do is move through the menu and set the parameters. Here I have changed the computer name, enabled Remote Desktop, and downloaded and installed all updates. I strongly recommend installing all updates before deploying Storage Spaces Direct.

Then I set the power options to "performance" with the command below:

```powershell
POWERCFG.EXE /S SCHEME_MIN
```

Once the configuration is finished, you can install the required roles and features. You can run the following cmdlet on both nodes:

```powershell
Install-WindowsFeature Hyper-V, Data-Center-Bridging, Failover-Clustering, RSAT-Clustering-Powershell, Hyper-V-PowerShell, Storage-Replica
```

Once you have run this cmdlet, the following roles and features are deployed:

- Hyper-V + PowerShell module
- Datacenter Bridging
- Failover Clustering + PowerShell module
- Storage Replica

Network settings

Once the OS configuration is finished, you can configure the network. First, I rename the network adapters as below:

```powershell
Get-NetAdapter | ? Name -notlike vEthernet* | ? InterfaceDescription -like Mellanox*#2 | Rename-NetAdapter -NewName Storage-1…
Get-NetAdapter | ? Name -notlike vEthernet* | ? InterfaceDescription -like Mellanox*Adapter | Rename-NetAdapter -NewName Storage-1…
Get-NetAdapter | ? Name -notlike vEthernet* | ? InterfaceDescription -like Intel*#2 | Rename-NetAdapter -NewName Management…
Get-NetAdapter | ? Name -notlike vEthernet* | ? InterfaceDescription -like Intel*Connection | Rename-NetAdapter -NewName Management…
```

Next, I create the Switch Embedded Teaming, called SW-1G, with both 1GB network adapters:

```powershell
New-VMSwitch -Name SW-1G -NetAdapterName Management01-0, Management… -EnableEmbeddedTeaming $True -AllowManagementOS $False
```

Now we can create two virtual network adapters, one for management and one for the heartbeat:

```powershell
Add-VMNetworkAdapter -SwitchName SW-1G -ManagementOS -Name Management-0
Add-VMNetworkAdapter -SwitchName SW-1G -ManagementOS -Name Cluster-100
```

Then I configure the VLANs on the vNIC and on the storage NICs:

```powershell
Set-VMNetworkAdapterVlan -ManagementOS -VMNetworkAdapterName Cluster-100 -Access -VlanId 100
Set-NetAdapter -Name Storage-101 -VlanID 101 -Confirm:$False
Set-NetAdapter -Name Storage-102 -VlanID 102 -Confirm:$False
```

The screenshot below shows the VLAN configuration on the physical and virtual adapters.

Next, I disable VM queue (VMQ) on the 1GB network adapters and set it on the 10GB network adapters. When I set the VMQ, I use multiples of 2 because hyperthreading is enabled. I start with a base processor number of 2 because it is recommended to leave the first core (core 0) for other processes.

```powershell
Disable-NetAdapterVMQ -Name Management*
# Core 1, 2 & 3 will be used for network traffic on Storage-101
Set-NetAdapterRSS Storage-101 -BaseProcessorNumber 2 -MaxProcessors 2 -Max…
```
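The processor numbering behind the VMQ/RSS settings above can be checked with a little arithmetic. A minimal Python sketch, assuming Windows numbers the two hyperthreads of each core consecutively (logical processors 0 and 1 on core 0, 2 and 3 on core 1, and so on): a base processor number has to be even to start on a core boundary, and a base of 2 skips core 0. The function names are hypothetical helpers, not part of any Windows API.

```python
from typing import List

THREADS_PER_CORE = 2  # assumption: hyperthreading on, two threads per core


def core_of(logical_processor: int, threads_per_core: int = THREADS_PER_CORE) -> int:
    """Physical core that owns a logical processor (consecutive numbering assumed)."""
    return logical_processor // threads_per_core


def is_core_aligned(logical_processor: int, threads_per_core: int = THREADS_PER_CORE) -> bool:
    """True if the logical processor is the first thread of its core."""
    return logical_processor % threads_per_core == 0


def cores_in_range(base: int, max_number: int, threads_per_core: int = THREADS_PER_CORE) -> List[int]:
    """Cores touched by logical processors base..max_number (inclusive)."""
    if base > max_number:
        raise ValueError("base must not exceed max_number")
    return sorted({core_of(lp, threads_per_core) for lp in range(base, max_number + 1)})


if __name__ == "__main__":
    print(core_of(0), core_of(1), core_of(2))  # 0 0 1: processor 2 is the first thread of core 1
    print(is_core_aligned(2), is_core_aligned(3))  # True False
    print(cores_in_range(2, 7))  # [1, 2, 3]
```

Under these assumptions, a logical-processor range of 2 to 7 covers cores 1 to 3. The range is only an example; on the server it would translate into the `-BaseProcessorNumber` and `-MaxProcessorNumber` parameters of `Set-NetAdapterRss`.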
